The Core Problem: Bot Traffic Isn’t Evenly Distributed

One of the most common questions we hear is simple: why does one brand see 1% bot traffic, while another sees 20% or even 30%?

At first glance, it feels random. Two brands can be in the same category, on the same platform, even operating at similar scale—yet their traffic quality looks completely different.

But bot traffic doesn’t distribute evenly. It concentrates. Some brands operate with relatively clean traffic for long stretches, while others experience sudden spikes that push bot traffic into double digits almost overnight.

This isn’t luck. It’s exposure.

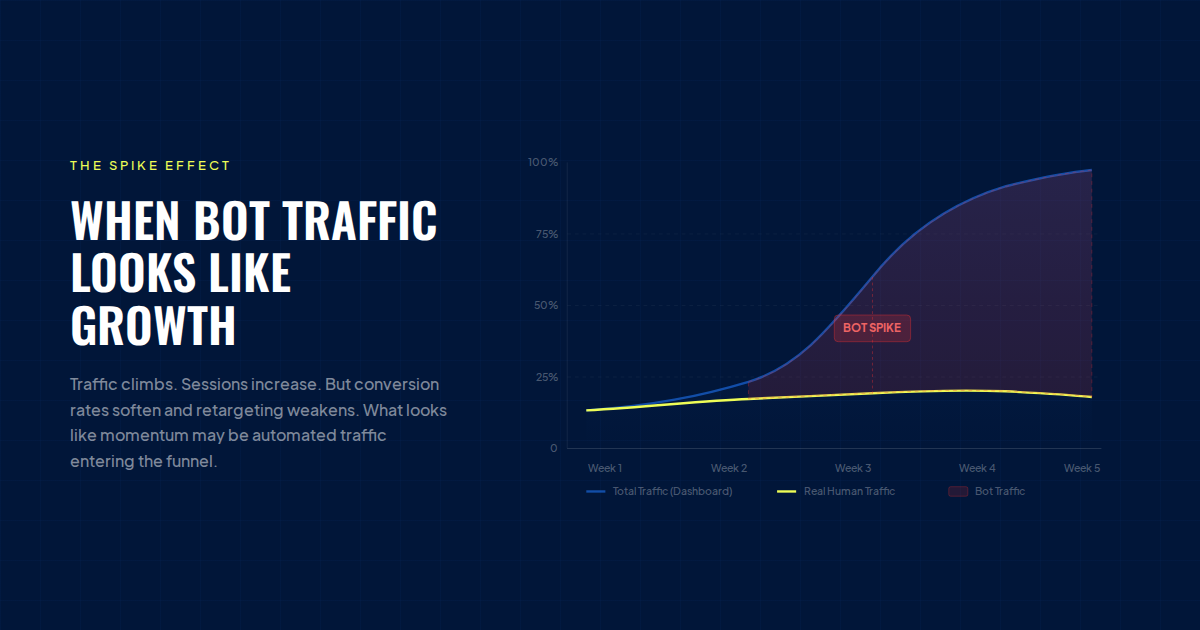

The Spike Effect: When Bot Traffic Looks Like Growth

One of the most dangerous aspects of bot traffic is how easily it can be misread.

A brand might open its dashboard and see traffic climbing, sessions increasing, and overall activity trending upward. On paper, everything points to growth. But underneath, something doesn’t quite add up.

Conversion rates begin to soften. Retargeting performance weakens. Attribution starts to feel inconsistent.

What looked like momentum turns out to be a mix of real users and automated traffic entering the funnel. These spikes rarely happen gradually. Instead, they arrive in waves—often tied to campaign launches, viral moments, or seasonal demand.

We’ve seen brands move from low single-digit bot traffic to 25%+ in just a matter of days, without realizing that a large portion of that “growth” wasn’t human.
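
As a rough illustration of the pattern described above (sessions climbing while conversion softens), here is a minimal Python sketch. The `DayStats` type, the `looks_like_bot_spike` helper, and the thresholds (a 25% session jump, a 20% conversion drop) are assumptions made for the example, not values from any particular analytics platform.

```python
from dataclasses import dataclass


@dataclass
class DayStats:
    """One day of storefront totals (hypothetical shape for this sketch)."""
    sessions: int
    orders: int


def conversion_rate(day: DayStats) -> float:
    """Orders per session; 0.0 when there were no sessions."""
    return day.orders / day.sessions if day.sessions else 0.0


def looks_like_bot_spike(
    baseline: list[DayStats],
    today: DayStats,
    traffic_jump: float = 1.25,    # assumed: sessions at least 25% above baseline
    conversion_drop: float = 0.8,  # assumed: conversion at most 80% of baseline
) -> bool:
    """Flag a day where sessions rise sharply while conversion rate falls."""
    if not baseline:
        return False

    total_sessions = sum(d.sessions for d in baseline)
    total_orders = sum(d.orders for d in baseline)
    avg_sessions = total_sessions / len(baseline)
    base_rate = total_orders / total_sessions if total_sessions else 0.0

    sessions_up = today.sessions >= avg_sessions * traffic_jump
    conversion_down = conversion_rate(today) <= base_rate * conversion_drop
    return sessions_up and conversion_down
```

A flag from a check like this is a reason to look closer, not proof of bot traffic: a campaign that attracts low-intent human visitors would produce the same divergence.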

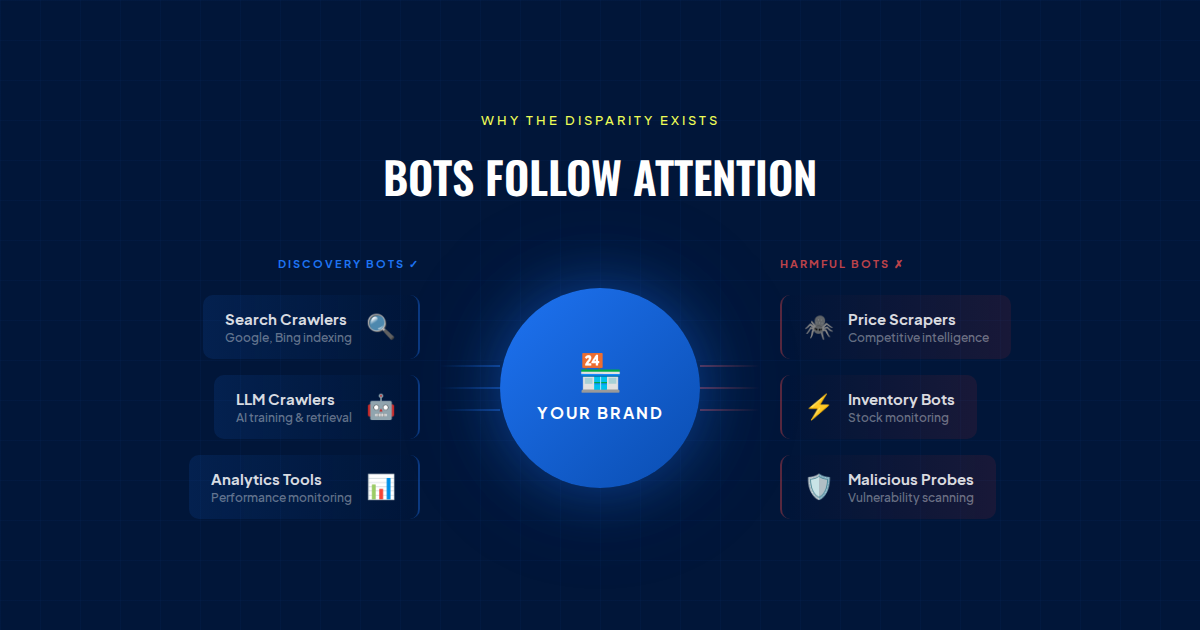

Why the Disparity Exists

The reason behind this gap comes down to one core idea: bots follow attention.

As brands grow, they naturally attract more visibility across paid media, search, and social platforms. But that visibility doesn’t just bring customers—it brings automated systems as well.

Scrapers begin monitoring pricing and inventory. AI crawlers ingest product data. Competitive tools scan your site structure. In some cases, malicious traffic probes for vulnerabilities. The more visible your brand becomes, the more surface area you expose.

This is why two brands with similar revenue can have completely different bot traffic profiles. One may still be under the radar, while the other has become a consistent point of interest for automated systems.

Paid Media and Bot Exposure

Paid media accelerates this effect.

As brands scale spend on platforms like Meta and TikTok, their URLs are distributed more widely. That exposure increases the likelihood of bots interacting with those links—triggering pixels, loading pages, and mimicking engagement patterns.

This doesn’t mean paid media is the problem. It simply means that scale brings complexity. As you grow, so does the mix of traffic hitting your site—and not all of it is high quality.

Seasonality and Sudden Surges

Peak periods like Black Friday and Cyber Monday amplify everything.

Traffic increases, competition intensifies, and bots become more active. Scraping ramps up. Automated systems test site performance. Malicious traffic becomes more aggressive.

During these windows, it’s common for bot traffic to spike significantly—sometimes doubling or tripling baseline levels. Because real demand is also increasing, these shifts are easy to overlook.

When Brands Become Targets

In some cases, bot traffic goes beyond general exposure.

Certain brands become more attractive targets due to their pricing, product demand, or visibility in the market. These brands aren’t just being discovered—they’re being actively interacted with at scale.

And without clear visibility into traffic quality, it’s easy to assume everything is normal.

Why This Matters More Than It Seems

On the surface, bot traffic might seem like a technical issue. In reality, it’s a performance issue.

As bot traffic increases, analytics become less reliable. Customer acquisition costs can appear inflated. Retargeting audiences become less effective. Even site performance can be impacted by unnecessary load.

Two brands may report similar traffic numbers, but one is operating on clean, high-intent users, while the other is optimizing against noise.

That difference compounds over time.

Why Filtering Bots Isn’t Enough

Platforms like Shopify have started to introduce bot filtering in analytics, which is a meaningful step forward.

But filtering only changes how data is displayed. It doesn’t stop bots from hitting your site.

They still load pages, trigger scripts, and interact with your infrastructure. So while reports may look cleaner, the underlying impact remains.

The Shift: From Visibility to Control

The next evolution for ecommerce brands is moving beyond visibility and into control.

It’s no longer enough to simply identify bot traffic. The real advantage comes from managing it—allowing the bots that drive discovery, like search engines and LLM crawlers, while preventing the ones that distort performance.
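
To make the allow-versus-prevent idea concrete, here is a minimal Python sketch of a three-way decision based on the request's user-agent string. It is an assumption-laden illustration, not how Edge Protect or any specific product works: the `decide` function and both token lists are hypothetical, and the choice of which crawlers to allow is a per-brand policy decision.

```python
# Crawler tokens a brand might choose to allow (illustrative list, not exhaustive).
ALLOWED_CRAWLER_TOKENS = ("googlebot", "bingbot", "gptbot")

# Tokens of common automation clients a brand might choose to block
# (illustrative; an assumption for this sketch).
BLOCKED_TOKENS = ("python-requests", "scrapy", "curl")


def decide(user_agent: str) -> str:
    """Return 'allow', 'block', or 'pass' for a raw User-Agent header value."""
    ua = user_agent.lower()

    # Discovery crawlers are let through explicitly.
    if any(token in ua for token in ALLOWED_CRAWLER_TOKENS):
        return "allow"

    # Empty user agents and known automation clients are stopped.
    if not ua.strip() or any(token in ua for token in BLOCKED_TOKENS):
        return "block"

    # Everything else falls through to normal handling.
    return "pass"
```

User-agent strings are easy to spoof, so a production setup would typically combine a check like this with stronger signals, such as verifying that a claimed search crawler really comes from that operator's published network.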

This is where solutions like Edge Protect come in. Not as a blunt tool that blocks everything, but as a more precise layer that operates at the edge—helping ensure that the traffic reaching your site is actually valuable.

Final Takeaway: The Difference Between 1% and 30%

The gap between 1% and 30% bot traffic isn’t random.

It’s driven by visibility, growth, and how much attention your brand is attracting across the internet. As that attention increases, so does your exposure to automated traffic.

The brands that win aren’t the ones with the most traffic.

They’re the ones with the best traffic quality.
